900-21010-0000-000 NVIDIA H100 80GB PCIe - Graphics Card - New Retail

The NVIDIA® H100 Tensor Core GPU delivers unprecedented acceleration to power the world’s highest-performing elastic data centers for AI, data analytics, and high-performance computing (HPC) applications. NVIDIA H100 Tensor Core technology supports a broad range of math precisions, providing a single accelerator for every compute workload. The NVIDIA H100 PCIe supports double-precision (FP64), single-precision (FP32), half-precision (FP16), and integer (INT8) compute tasks.
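One practical consequence of choosing a precision is how much data fits in memory. The sketch below, a back-of-the-envelope illustration rather than anything NVIDIA publishes, uses Python's `struct` format sizes for the four precisions listed above and assumes the card's 80 GB is exactly 80 × 10^9 usable bytes (in practice the driver, CUDA context, and frameworks reserve some of it). `elements_that_fit` is a hypothetical helper name.

```python
import struct

# Bytes per element for each precision, from Python's struct format codes:
# 'd' = IEEE 754 double (FP64), 'f' = single (FP32),
# 'e' = half (FP16), 'b' = signed 8-bit integer (INT8).
BYTES_PER_ELEMENT = {
    "FP64": struct.calcsize("d"),
    "FP32": struct.calcsize("f"),
    "FP16": struct.calcsize("e"),
    "INT8": struct.calcsize("b"),
}

# Assumption: all 80 GB usable, counted as 80 * 10**9 bytes.
HBM_BYTES = 80 * 10**9


def elements_that_fit(precision: str, memory_bytes: int = HBM_BYTES) -> int:
    """Upper bound on how many values of `precision` fit in `memory_bytes`."""
    return memory_bytes // BYTES_PER_ELEMENT[precision]


if __name__ == "__main__":
    for name, size in BYTES_PER_ELEMENT.items():
        count = elements_that_fit(name)
        print(f"{name}: {size} B/element, about {count / 1e9:.0f} billion values")
```

Halving the precision doubles the number of values that fit, which is one reason lower-precision formats matter for large models.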

NVIDIA H100 Tensor Core graphics processing units (GPUs) for mainstream servers come with a five-year NVIDIA AI Enterprise software subscription that includes enterprise support, simplifying AI adoption with the highest performance. This gives organizations access to the AI frameworks and tools needed to build H100-accelerated AI workflows such as conversational AI, recommendation engines, and vision AI.

Activate the NVIDIA AI Enterprise license for H100 at: https://www.nvidia.com/activate-h100/

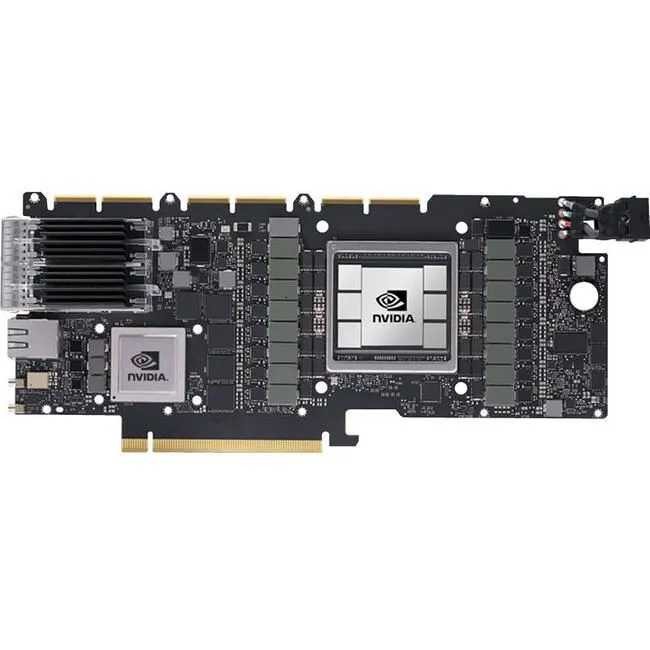

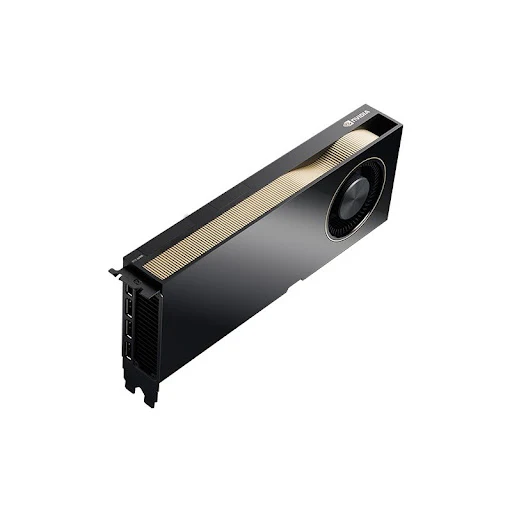

The NVIDIA H100 card is a dual-slot, 10.5-inch PCI Express Gen5 card based on the NVIDIA Hopper™ architecture. It uses a passive heat sink, so it relies on system airflow to stay within its thermal limits. The NVIDIA H100 PCIe operates unconstrained up to its maximum thermal design power (TDP) of 350 W to accelerate applications that require the fastest computational speed and highest data throughput. It also debuts the world’s highest memory bandwidth for a PCIe card, more than 2,000 gigabytes per second (GB/s), which shortens time to solution for the largest models and datasets.
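To put the bandwidth figure in perspective, here is a rough calculation of how long one full pass over the card's memory takes. It assumes exactly 80 GB read at exactly 2,000 GB/s; the listing says "more than" 2,000 GB/s, and sustained bandwidth in real kernels is typically somewhat lower. `full_sweep_seconds` is a hypothetical helper, not part of any NVIDIA tool.

```python
def full_sweep_seconds(memory_gb: float, bandwidth_gb_per_s: float) -> float:
    """Seconds to read every byte of `memory_gb` once at `bandwidth_gb_per_s`."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return memory_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    # Assumed figures: 80 GB of memory, exactly 2,000 GB/s sustained.
    seconds = full_sweep_seconds(80, 2000)
    print(f"One full pass over 80 GB: {seconds * 1000:.0f} ms")
    print(f"Upper bound on full passes per second: {1 / seconds:.0f}")
```

A memory-bound step that must read nearly all of the card's memory once, such as generating a token with a model whose weights fill most of the card, is roughly capped by this rate, which is why memory bandwidth matters for large models.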

The NVIDIA H100 PCIe card features Multi-Instance GPU (MIG) capability, which can partition the GPU into as many as seven hardware-isolated GPU instances. This provides a unified platform that lets elastic data centers adjust dynamically to shifting workload demands, and lets administrators allocate right-sized resources for everything from the smallest jobs to the largest multi-GPU workloads. This versatility means IT managers can maximize the utility of every GPU in their data center.
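The "right-sizing" idea can be sketched as picking the smallest MIG profile that satisfies a job's compute and memory needs. The profile list below is an assumption based on NVIDIA's published H100 80GB GPU-instance profile names; check what your driver actually offers with `nvidia-smi mig -lgip`. `MigProfile` and `right_size` are hypothetical names, and the code only models the choice; it does not configure the GPU.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MigProfile:
    name: str
    compute_slices: int  # out of 7 on a full H100
    memory_gb: int


# Assumed H100 80GB GPU-instance profiles; availability depends on the driver.
H100_80GB_PROFILES = [
    MigProfile("1g.10gb", 1, 10),
    MigProfile("1g.20gb", 1, 20),
    MigProfile("2g.20gb", 2, 20),
    MigProfile("3g.40gb", 3, 40),
    MigProfile("4g.40gb", 4, 40),
    MigProfile("7g.80gb", 7, 80),
]


def right_size(min_compute_slices: int, min_memory_gb: int) -> Optional[MigProfile]:
    """Return the smallest profile meeting both requirements, or None.

    "Smallest" means fewest compute slices first, then least memory.
    """
    candidates = [
        p for p in H100_80GB_PROFILES
        if p.compute_slices >= min_compute_slices and p.memory_gb >= min_memory_gb
    ]
    if not candidates:
        return None  # the job needs more than one full GPU
    return min(candidates, key=lambda p: (p.compute_slices, p.memory_gb))


if __name__ == "__main__":
    # (compute slices, memory GB) for a few hypothetical jobs
    for job in [(1, 8), (1, 16), (3, 30), (8, 10)]:
        profile = right_size(*job)
        print(job, "->", profile.name if profile else "needs multiple GPUs")
```

Sorting by compute before memory is one reasonable policy; a scheduler that is memory-constrained might prefer the opposite order.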

NVIDIA H100 PCIe cards use three NVIDIA® NVLink® bridges, the same bridges used with NVIDIA A100 PCIe cards. Two NVIDIA H100 PCIe cards connected this way deliver 600 GB/s of bidirectional bandwidth, about 5x the bandwidth of PCIe Gen5, to maximize application performance for large workloads.
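The NVLink-versus-PCIe comparison can be checked with simple arithmetic. This sketch assumes a x16 PCIe Gen5 link at 32 GT/s per lane with 128b/130b encoding overhead ignored (as headline figures usually do), and uses NVIDIA's published 600 GB/s bidirectional NVLink figure for two bridged H100 PCIe cards. `pcie_bidirectional_gb_s` is a hypothetical helper.

```python
# Assumed link parameters (see lead-in).
PCIE_GEN5_GT_PER_S = 32          # giga-transfers per second, per lane
LANES = 16
NVLINK_BIDIRECTIONAL_GB_S = 600  # two H100 PCIe cards over three bridges


def pcie_bidirectional_gb_s(gt_per_s: float = PCIE_GEN5_GT_PER_S,
                            lanes: int = LANES) -> float:
    """Approximate bidirectional PCIe bandwidth in GB/s, ignoring encoding."""
    per_direction = gt_per_s * lanes / 8  # ~1 bit per transfer per lane, 8 bits per byte
    return 2 * per_direction


if __name__ == "__main__":
    pcie = pcie_bidirectional_gb_s()
    ratio = NVLINK_BIDIRECTIONAL_GB_S / pcie
    print(f"PCIe Gen5 x16: {pcie:.0f} GB/s bidirectional")
    print(f"NVLink bridge: {NVLINK_BIDIRECTIONAL_GB_S} GB/s, about {ratio:.1f}x PCIe Gen5")
```

The ratio comes out just under 5x, matching the rounded figure in the listing.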

The NVIDIA H100 Tensor Core GPU delivers exceptional performance, scalability, and security for every workload. With the NVIDIA® NVLink® Switch System, up to 256 H100 GPUs can be connected to accelerate exascale workloads, while the dedicated Transformer Engine supports trillion-parameter language models. H100 uses breakthrough innovations in the NVIDIA Hopper™ architecture to deliver industry-leading conversational AI, speeding up large language models by up to 30x over the previous generation.
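A quick estimate shows why trillion-parameter models need many connected GPUs. The sketch counts only the memory for model weights, assuming 80 GB per GPU (as 80 × 10^9 bytes), 2 bytes per parameter in FP16 and 1 byte in FP8; activations, optimizer state, and KV caches would push the real number higher. `min_gpus_for_weights` is a hypothetical helper.

```python
# Assumptions: 80 GB per GPU, weights only, no framework overhead.
GPU_MEMORY_BYTES = 80 * 10**9
BYTES_PER_PARAM = {"FP16": 2, "FP8": 1}


def min_gpus_for_weights(num_params: int, precision: str) -> int:
    """Lower bound on GPUs needed just to hold the model weights."""
    total_bytes = num_params * BYTES_PER_PARAM[precision]
    # Integer ceiling division avoids floating-point rounding.
    return (total_bytes + GPU_MEMORY_BYTES - 1) // GPU_MEMORY_BYTES


if __name__ == "__main__":
    one_trillion = 10**12
    for precision in BYTES_PER_PARAM:
        gpus = min_gpus_for_weights(one_trillion, precision)
        print(f"{precision}: at least {gpus} GPUs for 1T parameters (weights only)")
```

Both results sit far below the 256-GPU limit of the NVLink Switch System, leaving headroom for the activation and optimizer memory that training also requires.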

Ready for Enterprise AI?

NVIDIA H100 GPUs for mainstream servers come with a five-year subscription to the NVIDIA AI Enterprise software suite, including enterprise support, simplifying AI adoption with the highest performance. This gives organizations access to the AI frameworks and tools they need to build H100-accelerated AI workflows such as AI chatbots, recommendation engines, and vision AI. Use the activation link above to access the NVIDIA AI Enterprise subscription and its support benefits for the NVIDIA H100.

Securely Accelerate Workloads From Enterprise to Exascale

NVIDIA H100 GPUs feature fourth-generation Tensor Cores and the Transformer Engine with FP8 precision, extending NVIDIA’s lead in AI with up to 9x faster training and up to 30x faster inference on large language models compared with the previous generation. For high-performance computing (HPC) applications, H100 triples the FP64 floating-point operations per second (FLOPS) of the previous generation and adds dynamic programming (DPX) instructions that deliver up to 7x higher performance on dynamic programming algorithms.

With second-generation Multi-Instance GPU (MIG), built-in NVIDIA confidential computing, and the NVIDIA NVLink Switch System, H100 securely accelerates all workloads for every data center, from enterprise to exascale.
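For a sense of what "dynamic programming algorithms" means here, below is a plain-Python sketch of the Smith-Waterman local sequence alignment score, one of the algorithms NVIDIA cites as benefiting from DPX instructions. It runs on the CPU and uses no DPX or GPU code; it only shows the add-then-take-the-maximum recurrence such algorithms repeat for every cell of a table. The scoring values (match 2, mismatch -1, linear gap penalty 2) are illustrative assumptions.

```python
def smith_waterman_score(a: str, b: str, match: int = 2,
                         mismatch: int = -1, gap: int = 2) -> int:
    """Best local-alignment score of `a` and `b` with a linear gap penalty."""
    rows, cols = len(a) + 1, len(b) + 1
    # h[i][j] = best score of a local alignment ending at a[i-1] and b[j-1]
    h = [[0] * cols for _ in range(rows)]
    best = 0
    for i in range(1, rows):
        for j in range(1, cols):
            diag = h[i - 1][j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            up = h[i - 1][j] - gap     # gap in b
            left = h[i][j - 1] - gap   # gap in a
            # Add-then-max over neighbours; clamping at 0 makes the alignment local.
            h[i][j] = max(0, diag, up, left)
            best = max(best, h[i][j])
    return best


if __name__ == "__main__":
    print(smith_waterman_score("TACG", "ACG"))
```

The table has len(a) × len(b) cells, so genome-scale inputs mean billions of these small add/max steps, which is the kind of inner loop that dedicated hardware instructions target.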
